Affiliation:

1Department of Internal Medicine, Medstar Washington Hospital Center, Washington, DC 20010, USA

Email: vsharma3090@gmail.com

ORCID: https://orcid.org/0009-0009-1471-354X

Affiliation:

2Department of Internal Medicine, Himalayan Institute of Medical Sciences, Dehradun 248016, India

ORCID: https://orcid.org/0000-0003-0489-3523

Affiliation:

3Department of Internal Medicine, Max Super Speciality Hospital, Dehradun 248009, India

ORCID: https://orcid.org/0009-0000-6492-0728

Affiliation:

4Department of Internal Medicine, Kasturba Medical College, Mangalore 575001, India

ORCID: https://orcid.org/0000-0001-5473-6169

Affiliation:

5St. Vincent Hospital, Worcester, MA 01608, USA

ORCID: https://orcid.org/0009-0007-1532-5042

Explor Med. 2026;7:1001383 DOI: https://doi.org/10.37349/emed.2026.1001383

Received: August 31, 2025 Accepted: January 20, 2026 Published: March 01, 2026

Academic Editor: Zhong Wang, University of Michigan, USA

The article belongs to the special issue Artificial Intelligence and Machine Learning in Cardiovascular Medicine

Cardiovascular disease remains the leading cause of global mortality with nearly 19 million deaths annually, while exponential growth in multimodal imaging, continuous monitoring, and electronic health record data has created analytical challenges exceeding traditional methods. This narrative review examines artificial intelligence (AI) and machine learning (ML) applications in cardiovascular medicine through a comprehensive literature analysis from 2019 to 2025, focusing on clinical validation and regulatory approvals. Current applications demonstrate significant clinical utility: automated ECG interpretation achieves > 90% accuracy in arrhythmia detection and predicts life-threatening arrhythmias up to two weeks before clinical onset; deep learning cardiac imaging analysis matches expert performance while reducing analysis time from 45 minutes to under 5 minutes; and ML risk prediction outperforms traditional scores with area under the curve values of 0.865 vs. 0.765. The FDA has approved 122 cardiology AI algorithms representing 14% of all clinical AI in the U.S. market, including ECG interpretation, echocardiographic measurement, and imaging analysis systems. Real-world implementations demonstrate 15–25% reductions in diagnostic errors and 20–30% faster emergency intervention times. However, substantial challenges persist: data quality limitations, algorithmic bias with 10–15% performance variation across populations, workflow integration barriers, and validation requirements. Future directions include multimodal systems, continuously learning algorithms, precision medicine applications, and equitable global implementation. Successful integration requires addressing limitations through diverse training datasets, transparent development, standardized validation, provider education, and maintaining physician autonomy. 
AI and ML represent powerful augmentation tools that, through evidence-based implementation, can transform cardiovascular care while preserving clinical expertise in decision-making.

Cardiovascular medicine has changed profoundly over the last century, from simple electrocardiography to complex multimodal imaging and sophisticated interventions. This change has generated vast amounts of complex data from electronic health records, continuous physiologic monitoring, high-resolution imaging, and molecular profiling [1]. A single cardiac MRI study produces many gigabytes of information, whereas continuous ECG monitoring records roughly 100,000 heartbeats per patient per day. Aggregated across healthcare systems, these volumes rapidly overwhelm the analytic capacity of conventional statistical methods.

Conventional cardiovascular risk models, exemplified by the Framingham Risk Score, were developed from a small number of clinical predictors and assume linear relationships between predictors and outcomes [2]. Although clinically practical, these models represent the nonlinear, dynamic, high-dimensional cardiovascular data of the modern era poorly, and their performance is typically subpar across heterogeneous populations. Artificial intelligence (AI) refers to computer systems that can imitate human reasoning, whereas machine learning (ML) comprises statistical techniques that allow systems to learn from observed data and refine their predictions through iterative exposure. Deep learning is a subtype of ML that uses multiple hierarchical layers to automatically extract progressively more abstract representations from unprocessed data [3].

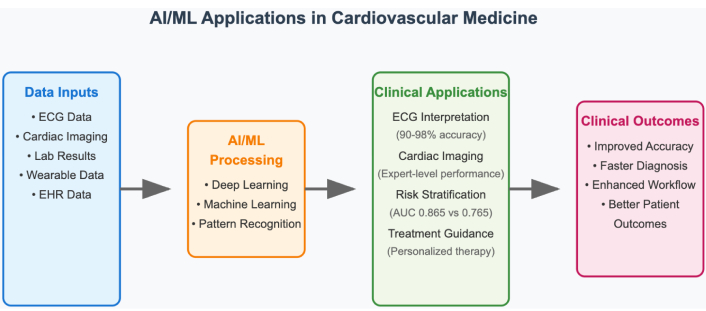

AI/ML addresses these analytical problems by learning directly from large, complex datasets and recognizing patterns that are often nonlinear and missed by conventional approaches. Deep learning applied to raw ECG signals has outperformed conventional interpretation in detecting left ventricular dysfunction [4], while AI-based imaging tools have achieved predictive accuracy comparable to that of expert cardiologists [5]. The World Health Organization has outlined six key principles for AI in health systems: protect autonomy, promote human well-being and safety, ensure transparency and explainability, foster responsibility and accountability, ensure inclusiveness and equity, and promote responsive and sustainable implementation [6] (Figure 1).

Figure 1. Overview of AI and ML applications in cardiovascular medicine. AI: artificial intelligence; ML: machine learning.

AI has proved exceptionally adept at computerized ECG interpretation, attaining cardiologist-level performance in recognizing arrhythmias. Current algorithms identify atrial fibrillation with about 90% accuracy and recognize multiple arrhythmia patterns with more than 85% accuracy [7, 8]. More advanced convolutional neural network (CNN) designs support real-time detection with > 98% accuracy, making them well suited to wearable and Holter monitoring [9]. A distinct advantage is that AI systems can predict life-threatening ventricular arrhythmias up to two weeks before clinical onset with nearly 80% accuracy, an important advance in sudden cardiac death prevention [10]. Deep learning algorithms also recognize subclinical conditions such as left ventricular dysfunction, hypertrophic cardiomyopathy, and electrolyte disorders from conventional 12-lead ECGs, offering potential for early treatment [11].

Applications of AI in cardiovascular imaging span multiple modalities with proven clinical value. In echocardiography, AI enables automatic cardiac chamber segmentation with real-time boundary detection, volumetric measurement within 2% of expert measurement, and automatic detection of myocardial infarction [12, 13]. This reduces analysis time from 45 minutes to less than 5 minutes while maintaining diagnostic accuracy comparable to expert interpretation [14]. In cardiac CT, applications include automatic plaque quantification, coronary artery calcium scoring, and computation of CT-derived fractional flow reserve, with AI-driven calcium scoring reducing read time from 10 minutes to less than 30 seconds [15]. In cardiac MRI, AI applications range from automatic scan planning and rapid calculation of ventricular function to tissue characterization through strain analysis and late gadolinium enhancement assessment [16]. Nuclear imaging also benefits from AI through improved image quality, noise reduction that allows 50% lower radiation exposure, and sophisticated risk prediction models that integrate imaging information with clinical parameters [17].

ML models that incorporate electronic health record information outperform traditional risk scores. A systematic meta-analysis comparing ML-based models with traditional statistical models showed that random forest and deep learning models produced superior predictions, with area under the curve values of 0.865 and 0.847, respectively, compared to 0.765 for traditional risk scores [18]. These models used heterogeneous variables such as laboratory values, medication exposures, comorbidity profiles, and social determinants of health, allowing broader risk prediction than the traditional approach [19].
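Because the comparison above rests on area under the curve (AUC) values, a brief sketch of what that metric measures may help. The function below uses the pairwise-ranking interpretation of AUC: the probability that a randomly chosen patient with an event receives a higher risk score than a randomly chosen patient without one. The scores are invented for illustration and the function name is hypothetical; this is not the method used in the cited meta-analysis.

```python
from typing import Sequence


def pairwise_auc(scores_event: Sequence[float], scores_no_event: Sequence[float]) -> float:
    """AUC as the fraction of (event, no-event) pairs ranked correctly.

    Ties count as half a correct ranking.
    """
    if not scores_event or not scores_no_event:
        raise ValueError("both groups need at least one score")
    wins = 0.0
    for s_pos in scores_event:
        for s_neg in scores_no_event:
            if s_pos > s_neg:
                wins += 1.0
            elif s_pos == s_neg:
                wins += 0.5
    return wins / (len(scores_event) * len(scores_no_event))


# Illustrative risk scores only (not patient data).
example_auc = pairwise_auc([0.9, 0.8, 0.4], [0.5, 0.3])
print(round(example_auc, 3))
```

An AUC of 0.5 corresponds to chance-level ranking and 1.0 to perfect separation, which is why a move from 0.765 to 0.865 is considered a substantial gain in discrimination.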

Traditional cardiovascular risk models face fundamental limitations in handling heterogeneous variables due to their linear or logistic regression frameworks, which assume:

(1) Linear relationships between predictors and outcomes, failing to capture complex non-linear interactions (e.g., the synergistic effect of diabetes and hypertension exceeds additive contributions).

(2) Pre-specified interactions—clinicians must manually define interaction terms, but cannot feasibly test all possible combinations across dozens of variables.

(3) Fixed functional forms—traditional models require variables to follow specific distributions, necessitating transformations that may lose information.

ML models, particularly random forests and deep neural networks, overcome these limitations through [19]:

(1) Automatic feature interaction discovery—random forests evaluate variable importance across multiple decision trees, identifying complex multi-way interactions without pre-specification (e.g., discovering that the combination of elevated lipoprotein(a), family history, and specific medication non-adherence patterns uniquely predicts events).

(2) Non-linear transformation learning—deep learning layers automatically learn optimal non-linear transformations of input variables, capturing relationships like threshold effects (risk accelerating sharply above specific biomarker values) and plateau effects (benefits diminishing beyond certain intervention intensities).

(3) Heterogeneous data type integration—neural networks seamlessly process continuous laboratory values, categorical variables (medication classes), ordinal data (functional class), and high-dimensional inputs (genetic variants) within unified architectures, whereas traditional models struggle with mixed data types and require extensive preprocessing.
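The point about pre-specified interactions can be made concrete with a small sketch. It compares a main-effects-only logistic model with one that includes a diabetes-by-hypertension interaction term. All coefficients are hypothetical values chosen for illustration, not estimates from any study, and the function names are assumptions of this sketch.

```python
import math


def logistic(z: float) -> float:
    """Convert a log-odds value into a probability."""
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical log-odds coefficients (illustrative only, not fitted to data).
INTERCEPT = -3.0
BETA_DIABETES = 0.7
BETA_HYPERTENSION = 0.6
BETA_INTERACTION = 0.5  # extra log-odds when both conditions are present


def risk_main_effects(diabetes: int, hypertension: int) -> float:
    """Traditional model without an interaction term."""
    z = INTERCEPT + BETA_DIABETES * diabetes + BETA_HYPERTENSION * hypertension
    return logistic(z)


def risk_with_interaction(diabetes: int, hypertension: int) -> float:
    """Same model after an analyst manually adds the interaction term."""
    z = (INTERCEPT
         + BETA_DIABETES * diabetes
         + BETA_HYPERTENSION * hypertension
         + BETA_INTERACTION * diabetes * hypertension)
    return logistic(z)


for d, h in [(0, 0), (1, 0), (0, 1), (1, 1)]:
    print(d, h, round(risk_main_effects(d, h), 3), round(risk_with_interaction(d, h), 3))
```

The two models agree unless both conditions are present, where only the second reflects the synergy. In a regression framework that term must be written by hand for every candidate combination, whereas tree ensembles and neural networks can in principle learn such structure from the data.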

Applications of AI in heart failure management are as follows:

Remote monitoring systems that identify exacerbations days before clinical presentation facilitate proactive interventions that decrease hospitalizations by 30–40% [20].

Risk prediction models for hospitalization detect high-risk patients with area under the curve values above 0.80, enabling targeted interventions [21].

ML phenogrouping has effectively characterized responders to cardiac resynchronization therapy with 75% accuracy vs. 60% with conventional selection criteria [22].

Advanced applications include medication optimization algorithms, personalizing therapy selection based on individual patient characteristics and response patterns [23].

AI use in acute coronary syndrome includes emergency department (ED) triage systems that quickly recognize high-risk presentations with sensitivity in excess of 90% [24], door-to-balloon time optimization that decreases treatment delay by 20–30 minutes [25], and dynamic risk stratification that incorporates multiple data sources, including troponin kinetics and ECG evolution. Such systems can support rapid decisions about immediate intervention while optimizing resource utilization [26] (Table 1).

Table 1. Performance comparison of AI/ML vs. traditional methods in cardiovascular applications.

| Clinical domain | AI/ML approach | Traditional method | AI/ML performance | Traditional performance | Improvement |
|---|---|---|---|---|---|
| Risk prediction | | | | | |
| CVD risk assessment | Random forest/deep learning | Framingham risk score | AUC: 0.865 | AUC: 0.765 | +13.1% |
| Heart failure readmission | Gradient boosting | LACE score | AUC: 0.82 | AUC: 0.69 | +18.8% |
| Acute MI risk | Neural networks | TIMI score | Sensitivity: 94% | Sensitivity: 78% | +20.5% |
| Diagnostic accuracy | | | | | |
| Arrhythmia detection | CNN (12-lead ECG) | Cardiologist review | Accuracy: 92.3% | Accuracy: 89.1% | +3.6% |
| Echo LV function | Deep learning | Manual measurement | Correlation: r = 0.98 | Inter-observer: r = 0.89 | +10.1% |
| Coronary stenosis | CNN (angiography) | Visual assessment | AUC: 0.91 | AUC: 0.84 | +8.3% |
| Workflow efficiency | | | | | |
| Echo analysis time | Automated AI | Manual analysis | 5 minutes | 45 minutes | –88.9% |
| CT calcium scoring | AI algorithm | Manual scoring | 30 seconds | 10 minutes | –95.0% |
| ECG interpretation | Real-time AI | Physician review | 10 seconds | 5 minutes | –96.7% |

AI: artificial intelligence; CNN: convolutional neural network; ML: machine learning.
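The values in the improvement column are consistent with a relative change computed against the traditional method, although the source does not state the formula explicitly. The short sketch below reproduces a few rows under that assumption; the function name is hypothetical.

```python
def relative_change_pct(ai_value: float, traditional_value: float) -> float:
    """Percentage change of the AI/ML value relative to the traditional value."""
    if traditional_value == 0:
        raise ValueError("traditional_value must be non-zero")
    return round((ai_value - traditional_value) / traditional_value * 100.0, 1)


# AUC for CVD risk assessment: 0.865 vs. 0.765
print(relative_change_pct(0.865, 0.765))
# Echo analysis time in minutes: 5 vs. 45
print(relative_change_pct(5, 45))
# CT calcium scoring in seconds: 30 vs. 600 (10 minutes)
print(relative_change_pct(30, 600))
```

Note that times must be converted to a common unit before comparison, and that a relative gain in AUC is not the same as a gain in percentage points (0.865 vs. 0.765 is 10 points in absolute terms).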

AI enables dynamic risk stratification through continuous integration of evolving clinical data streams. Consider acute coronary syndrome management: traditional risk scores (TIMI, GRACE) provide single timepoint assessments at presentation, but patient risk evolves substantially during initial management [25].

AI-based dynamic models continuously update risk predictions by [26]:

(1) Serial troponin kinetics analysis—Rather than classifying troponin as simply “elevated” or “normal”, ML algorithms analyze the trajectory of serial measurements (e.g., rapid rise pattern: 0.1→0.8→3.2 ng/mL over 6 hours indicates higher risk than stable elevation: 1.5→1.6→1.7 ng/mL), extracting features including peak value, rate of rise, and time-to-peak that improve outcome prediction (AUC 0.89 vs. 0.76 for peak value alone).

(2) ECG evolution tracking—Algorithms detect subtle ST-segment changes across serial ECGs that may be imperceptible to clinicians (e.g., cumulative 0.3 mm ST depression developing across leads V4–V6 over 4 hours), with dynamic ST-segment trends improving ischemia detection sensitivity from 72% to 91%.

(3) Vital sign pattern recognition—Rather than reacting to individual abnormal values, AI identifies patterns such as progressive tachycardia with narrowing pulse pressure, suggesting cardiogenic shock evolution, triggering alerts 2–4 hours before clinical deterioration.
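The troponin kinetics features named in item (1) can be sketched in a few lines. The example uses the two trajectories quoted above and assumes, purely for illustration, that the three measurements were drawn at 0, 3, and 6 hours; the source states only that they span 6 hours. The function name and the returned keys are hypothetical.

```python
from typing import Dict, Sequence


def troponin_features(times_h: Sequence[float], values: Sequence[float]) -> Dict[str, float]:
    """Extract peak value, time-to-peak, and steepest rate of rise from serial troponins."""
    if len(times_h) != len(values) or len(values) < 2:
        raise ValueError("need at least two paired measurements")
    values = list(values)
    peak = max(values)
    peak_index = values.index(peak)
    # Steepest rise between consecutive draws, in ng/mL per hour.
    max_rate = max(
        (values[i + 1] - values[i]) / (times_h[i + 1] - times_h[i])
        for i in range(len(values) - 1)
    )
    return {
        "peak_ng_ml": peak,
        "time_to_peak_h": times_h[peak_index] - times_h[0],
        "max_rate_ng_ml_per_h": max_rate,
    }


# Sampling times (0, 3, 6 h) are an assumption of this sketch.
rapid_rise = troponin_features([0, 3, 6], [0.1, 0.8, 3.2])
stable_elevation = troponin_features([0, 3, 6], [1.5, 1.6, 1.7])
print(rapid_rise)
print(stable_elevation)
```

Under these assumptions the rapid-rise pattern has a steepest slope of about 0.8 ng/mL per hour versus about 0.03 ng/mL per hour for the stable elevation. The first measurement alone would not reveal this difference, and would in fact rank the stable patient higher.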

AI brings tailored approaches to treatment through drug selection algorithms based on individual genetics, comorbidities, and expected responses. Systems predict the optimal timing of antiplatelet therapy, guide statin choice by genetic polymorphisms, and individualize warfarin dosing to reduce time to therapeutic anticoagulation [27]. Device therapy optimization uses AI to guide implantable cardioverter-defibrillator programming, with algorithms considering patient-specific arrhythmia trends to optimize detection zones and reduce inappropriate shocks [28].

Preoperative risk models combine multiple data sources to predict surgical outcomes more accurately than conventional risk scores, facilitating improved resource allocation and patient selection [29]. AI-augmented systems offer intra-procedural guidance that improves stent deployment accuracy and decreases contrast use [30]. In cardiac electrophysiology, AI-assisted catheter guidance systems help optimize ablation lesion placement, shorten procedures, and improve success rates [31].

CNNs excel at medical imaging tasks and improve coronary artery disease risk assessment through automated extraction of features from imaging data [32]. CNNs process cardiac images through sequential convolutional layers that detect progressively more complex features. Initial layers identify basic edges and textures in myocardial tissue, intermediate layers recognize anatomical structures (chamber walls, valves), and deeper layers integrate these features to identify pathological patterns such as wall motion abnormalities or valve dysfunction. In coronary artery disease risk assessment, CNNs extract features including plaque morphology (calcified vs. non-calcified composition), vessel geometry (stenosis severity, vessel tortuosity), and surrounding tissue characteristics (pericoronary fat attenuation). These automatically extracted features demonstrate stronger correlation with adverse cardiovascular events (hazard ratio 2.1–3.4) compared to traditional visual assessment, as they capture subtle patterns imperceptible to human observers [32].

Recurrent neural networks (RNNs) and long short-term memory (LSTM) networks handle time-series data and are therefore well suited to ECG interpretation and continuous monitoring [33]. These networks capture long-term patterns in cardiac rhythm, predicting arrhythmic events hours prior to clinical presentation [34]. LSTM networks address the vanishing gradient problem of standard RNNs through specialized memory cells with input, forget, and output gates. In ECG interpretation, LSTM networks process heartbeat sequences temporally, maintaining memory of prior cardiac cycles to detect rhythm disturbances. The forget gate determines which information from previous heartbeats to retain (e.g., baseline rhythm patterns), the input gate integrates new information (current heartbeat characteristics), and the output gate controls how much of the stored state is passed on to inform predictions about arrhythmic events. This architecture enables detection of gradual rhythm deterioration (such as progressive PR interval prolongation preceding complete heart block) that single-timepoint analysis would miss [33, 34].

Transformer models are state-of-the-art architectures for processing sequential information and show promise in multi-lead ECG interpretation and in combining heterogeneous temporal signals [35].
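The LSTM gating described above can be illustrated with a single scalar memory cell. This is a minimal sketch of the standard LSTM update equations, not the architecture of any cited model. The weights in `PARAMS` are hypothetical hand-picked values, and the inputs stand in for a normalized per-beat feature such as PR interval.

```python
import math
from typing import Dict, Sequence, Tuple

Gate = Tuple[float, float, float]  # (input weight, recurrent weight, bias)


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x: float, h_prev: float, c_prev: float,
              params: Dict[str, Gate]) -> Tuple[float, float]:
    """One time step of a scalar LSTM cell; returns (hidden state, cell state)."""
    def pre_activation(name: str) -> float:
        w_x, w_h, bias = params[name]
        return w_x * x + w_h * h_prev + bias

    forget = sigmoid(pre_activation("forget"))       # how much old memory to keep
    write = sigmoid(pre_activation("input"))         # how much new information to store
    expose = sigmoid(pre_activation("output"))       # how much memory to pass on
    candidate = math.tanh(pre_activation("candidate"))
    c = forget * c_prev + write * candidate
    h = expose * math.tanh(c)
    return h, c


# Hypothetical weights chosen by hand for illustration (not trained).
PARAMS: Dict[str, Gate] = {
    "forget": (0.0, 0.0, 2.0),
    "input": (1.0, 0.0, 0.0),
    "output": (0.0, 0.0, 1.0),
    "candidate": (1.0, 0.0, 0.0),
}


def run_sequence(xs: Sequence[float], params: Dict[str, Gate] = PARAMS) -> Tuple[float, float]:
    """Feed a sequence of per-beat features through the cell, starting from zero state."""
    h, c = 0.0, 0.0
    for x in xs:
        h, c = lstm_step(x, h, c, params)
    return h, c


print(run_sequence([0.1, 0.1, 0.1, 0.1]))  # stable feature
print(run_sequence([0.1, 0.2, 0.3, 0.4]))  # progressively increasing feature
```

With these weights the cell state accumulates evidence across beats, so the progressively increasing sequence ends with a larger cell state than the stable one. A trained network learns its gate weights from data rather than having them set by hand.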

Real-time monitoring systems offer ongoing patient assessment through integration with hospital information systems, enabling prompt alerting for significant changes [36]. Integration with wearable devices extends monitoring beyond hospital environments, with consumer devices providing important cardiovascular health information. Recent research shows that smartwatch-based ECG monitoring can identify atrial fibrillation with sensitivity over 90% [37]. Integration with electronic health records embeds AI tools directly into clinical decision-making workflows [38]. Federated learning facilitates cross-institution collaboration in model building while preserving patient data confidentiality: algorithms are trained locally at multiple institutions, and patient data are never pooled at a central site [39].
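One common way to realize federated learning is weighted parameter averaging in the style of federated averaging (FedAvg). The source does not specify which aggregation scheme the cited work uses, so the sketch below is an illustrative assumption. Each site sends only its locally trained parameter vector and its sample count; the function name and numbers are hypothetical.

```python
from typing import List, Sequence


def federated_average(site_params: Sequence[Sequence[float]],
                      site_sizes: Sequence[int]) -> List[float]:
    """Average parameter vectors across sites, weighted by local sample count."""
    if len(site_params) != len(site_sizes) or not site_params:
        raise ValueError("need one sample count per site")
    n_params = len(site_params[0])
    if any(len(p) != n_params for p in site_params):
        raise ValueError("all sites must share the same model shape")
    total = sum(site_sizes)
    return [
        sum(params[j] * size for params, size in zip(site_params, site_sizes)) / total
        for j in range(n_params)
    ]


# Two hypothetical hospitals with locally trained two-parameter models.
global_params = federated_average([[1.0, 2.0], [3.0, 4.0]], [100, 300])
print(global_params)
```

Only model parameters cross institutional boundaries in this scheme. In practice the averaging step is repeated over many rounds, and additional safeguards such as secure aggregation are often layered on top.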

The FDA has cleared numerous AI/ML devices for use in cardiovascular disease. Cardiology ranks second among medical specialties, with 122 FDA-cleared AI algorithms representing 14% of all clinical AI algorithms available in the U.S. market [40]. Cleared indications include AI-enhanced ECG interpretation systems that detect left ventricular dysfunction, automated echocardiographic measurement systems, and cardiac imaging analysis software. European regulation under the Medical Device Regulation emphasizes clinical validation, requiring extensive clinical evidence as well as continual post-market surveillance [41] (Table 2).

Table 2. FDA-approved AI/ML applications in cardiovascular medicine.

| Application category | Device/Algorithm | FDA approval date | Clinical indication | Performance metrics | Clinical impact |
|---|---|---|---|---|---|
| ECG interpretation | | | | | |
| Arrhythmia detection | Apple Watch ECG | 2018 | Atrial fibrillation screening | Sensitivity: 98.3%, specificity: 99.6% | Population screening |
| LV dysfunction | AI-ECG (Mayo Clinic) | 2019 | Left ventricular dysfunction detection | AUC: 0.93, sensitivity: 86.3% | Early diagnosis |
| 12-Lead analysis | Cardiologs ECG Review | 2017 | Multi-arrhythmia detection | Sensitivity: > 90% for major arrhythmias | Automated interpretation |
| Cardiac imaging | | | | | |
| Echocardiography | EchoGo Core | 2020 | Automated measurements | < 2% variability vs. expert | Workflow efficiency |
| Cardiac CT | HeartFlow FFRCT | 2014 | Fractional flow reserve | Diagnostic accuracy: 84% | Non-invasive assessment |
| Cardiac MRI | Circle CVI42 | 2019 | Ventricular function analysis | Inter-observer variability < 5% | Standardized analysis |
| Risk stratification | | | | | |
| CAD risk | Cleerly Plaque Analysis | 2021 | Coronary plaque quantification | Risk prediction improvement: 15–20% | Precision medicine |
| Heart failure | IBM Watson Health | 2020 | HF readmission prediction | AUC: 0.82 | Resource optimization |

AI: artificial intelligence; ML: machine learning.

The success of deployments in hospital systems varies with integration into clinical workflow, provider education programs, and institutional support [42]. Successful implementations typically involve multidisciplinary teams of clinicians, informaticists, and administrators who jointly address integration barriers. Clinical outcome studies show increased diagnostic confidence, reductions in diagnostic error of 15–25%, emergency interventions delivered 20% sooner, and enhanced clinical decision-making through evidence-based recommendations [43, 44]. Real-world experience from large health systems demonstrates robust value over implementation cycles of 12–24 months, with continued performance improvement as algorithms are optimized to local populations [45].

Implementation of the AI-enabled ECG algorithm for left ventricular dysfunction detection across Mayo Clinic’s network demonstrated a 35% increase in early identification of asymptomatic LV dysfunction over 18 months (n = 180,000 ECGs). Specifically, the system identified 1,427 patients with AI-detected LV dysfunction who had normal clinical ECGs, of whom 420 (29%) were confirmed to have reduced ejection fraction (< 40%) on subsequent echocardiography. This early detection enabled the timely initiation of guideline-directed medical therapy, resulting in a 23% reduction in subsequent heart failure hospitalizations compared to historical controls [4].

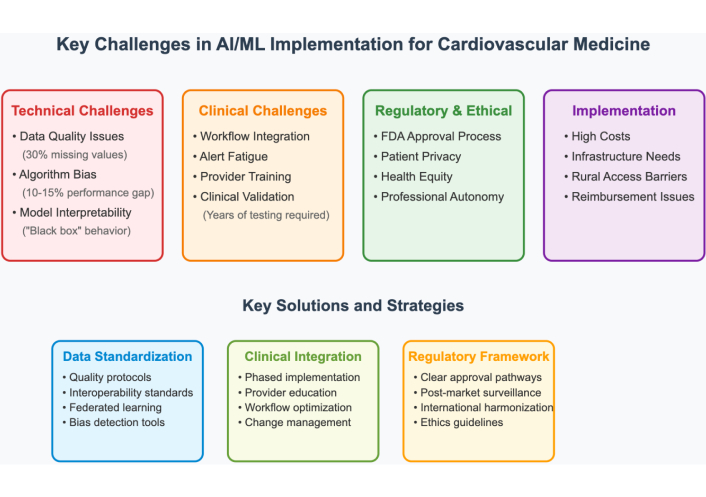

Medical information is often incomplete and imprecise, with electronic health records containing more than 30% missing values for specific variables [46]. Common protocols for collecting, storing, and sharing information are still insufficient, causing performance differences between institutions and restricting algorithm generalizability. Interoperability problems between systems persist, creating data silos that hamper the effectiveness of AI [47].

Training datasets may not represent the variation across diverse populations, resulting in biased predictions and the possibility of discrimination [48]. Past health care inequities can be entrenched or amplified by AI systems, with performance varying by 10–15% between racial/ethnic populations [49]. Examples include the lower accuracy of cardiovascular risk models in Hispanic and Asian individuals. Remediation involves diverse training datasets, bias-detection algorithms, and ongoing performance monitoring across demographic groups [50].
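Ongoing monitoring across demographic groups can start with something as simple as stratified accuracy. The sketch below computes per-group accuracy and the largest gap between groups. The records are fabricated toy values for illustration, the group labels are placeholders, and the function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Record = Tuple[str, int, int]  # (group label, true outcome, predicted outcome)


def accuracy_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Return the fraction of correct predictions within each group."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / total[group] for group in total}


def largest_gap(accuracies: Dict[str, float]) -> float:
    """Difference between the best- and worst-performing groups."""
    return max(accuracies.values()) - min(accuracies.values())


# Toy records only; "A" and "B" are placeholder group labels.
toy_records = [
    ("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 1),
    ("B", 1, 0), ("B", 0, 0), ("B", 1, 1), ("B", 0, 1),
]
per_group = accuracy_by_group(toy_records)
print(per_group, largest_gap(per_group))
```

A real audit would use discrimination and calibration metrics in addition to accuracy, with confidence intervals, since small subgroups produce noisy estimates.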

The “black box” behavior of most AI systems, especially complex deep learning models, impedes clinical adoption [51]. Clinicians need to understand how a system reaches its decisions before they can trust it, particularly in high-stakes clinical environments. Without interpretability, trust in AI systems remains limited, which prevents their broad adoption. Recent developments in explainable AI include attention mechanisms that highlight important input features and saliency maps that identify the image regions driving a prediction [52].

Rigorous validation remains crucial but cumbersome, requiring extensive testing across varying populations and clinical situations [53]. Cross-site validation studies are costly and time-consuming, taking years to finish and involving thousands of patients to achieve sufficient statistical power. Prospective validation is the gold standard but is hampered by practical issues, including recruitment challenges and ethical concerns about AI-driven care [54].

The integration of AI tools into the current clinical workflow poses important challenges in implementation, independent of technical integration [55]. Alert fatigue is a substantive issue, in that too many AI-created notifications may decrease physician focus on important alerts. Implementation successes are commonly achieved in stages with broad physician feedback and incremental system tuning [56].

Medical education in AI application is still lacking, as most providers do not have fundamental knowledge of the strengths and weaknesses of AI [57]. Adoption varies widely by specialty, age, and technical proficiency, though younger doctors tend to show more willingness to use AI tools. Education needs to cover technical concepts, ethics, bias recognition, and appropriate clinical application [58].

AI systems depend on large volumes of patient data, which also pose significant risks of privacy violations and unauthorized access [59]. Weighing the use of data for AI development against regulatory compliance and patient privacy is a constant challenge. Cloud-hosted AI systems raise additional security issues in terms of storage, transmission, and access control [60].

The existing healthcare disparities may be perpetuated or exacerbated by AI systems trained on biased data [61]. Economic barriers to AI implementation may create disparities between well-resourced and under-resourced healthcare systems. Rural and underserved populations may benefit most from AI applications but face greater implementation barriers due to limited technological infrastructure [62].

Striking an adequate balance between AI assistance and independent physician decision-making remains important [63]. AI should complement clinical decision-making rather than replace it, in order to preserve the physician-patient relationship and clinical judgment. Systematic reliance on AI systems can cause deskilling of clinical competencies, particularly among trainees who become dependent on AI [64]. Figure 2 illustrates the major implementation challenges facing AI/ML in cardiovascular medicine, along with corresponding solution strategies.

Figure 2. Major implementation challenges and corresponding solutions for AI/ML in cardiovascular medicine. AI: artificial intelligence; ML: machine learning.

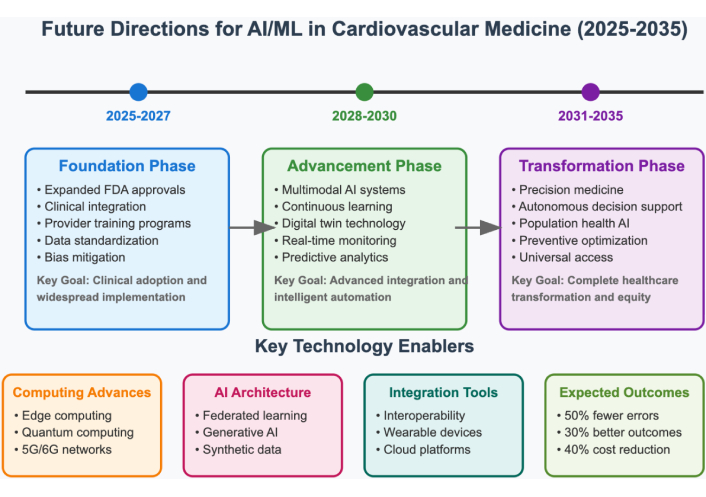

Future applications of AI will draw on multiple data modalities, such as imaging, physiologic monitoring, genomics, and clinical documents, to produce a complete evaluation of patients [65]. Applications that combine structured electronic health record data with unstructured clinical notes, continuous monitoring data streams, and high-resolution imaging will eventually yield a comprehensive patient profile covering every relevant dimension of cardiovascular health. Initial studies show improved diagnostic accuracy when multiple data modalities are evaluated together compared to approaches based on a single modality [66].

However, multimodal integration faces substantial obstacles:

(1) Temporal alignment—synchronizing data streams with different sampling frequencies (continuous ECG at 500 Hz vs. intermittent blood pressure measurements) requires sophisticated preprocessing.

(2) Missing modality handling—clinical reality often includes incomplete data, yet most multimodal architectures fail catastrophically when input modalities are absent.

(3) Feature space alignment—combining high-dimensional imaging data (10⁶–10⁹ features) with low-dimensional clinical variables (10²–10³ features) creates optimization challenges and risks image features dominating predictions.

(4) Computational burden—processing multiple data types simultaneously requires 5–10× greater computational resources than unimodal approaches, limiting real-time clinical deployment.

Research priorities: Developing attention mechanisms that dynamically weight modality contributions based on data quality, creating modality-agnostic architectures that perform robustly with incomplete inputs, and establishing standardized multimodal benchmarks for fair algorithm comparison.
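The temporal alignment obstacle in item (1) can be illustrated with a simple last-observation-carried-forward join. The sketch attaches the most recent blood pressure reading to each ECG analysis window and returns `None` when no reading exists yet, which also makes the missing-modality case explicit. Timestamps, values, and the function name are hypothetical, and carrying the last value forward is only one of several possible alignment strategies.

```python
from bisect import bisect_right
from typing import List, Optional, Sequence


def align_last_observation(window_times: Sequence[float],
                           bp_times: Sequence[float],
                           bp_values: Sequence[float]) -> List[Optional[float]]:
    """For each ECG window time, return the latest BP value measured at or before it.

    `bp_times` must be sorted in ascending order.
    """
    if len(bp_times) != len(bp_values):
        raise ValueError("bp_times and bp_values must have equal length")
    aligned: List[Optional[float]] = []
    for t in window_times:
        idx = bisect_right(bp_times, t) - 1  # index of last measurement <= t
        aligned.append(bp_values[idx] if idx >= 0 else None)
    return aligned


# ECG windows every 10 minutes; systolic BP measured at minutes 5 and 25 (toy values).
print(align_last_observation([0, 10, 20, 30], [5, 25], [120, 135]))
```

A production pipeline would also record how old each carried-forward value is, since a blood pressure reading taken hours earlier should not be treated the same as one taken a minute ago.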

Adaptive systems will incorporate new evidence, adjust to changes in populations, and update their performance based on real-world outcomes without having to retrain entire models [67]. Continuous-learning methods also address the gradual degradation of model performance over time, keeping AI systems aligned with evolving medical evidence and practice patterns. Edge-computing applications will allow real-time AI processing in resource-constrained environments and reduce latency in critical care applications [68].

However, continuous learning algorithms also face significant challenges that need to be addressed:

(1) Catastrophic forgetting—as models learn from new data, they may lose previously acquired knowledge, particularly problematic when population characteristics shift.

(2) Concept drift detection—identifying when model recalibration is needed without frequent manual validation requires automated performance monitoring across patient subgroups.

(3) Regulatory compliance—continuous model updates create challenges for the FDA’s traditional static device approval framework, requiring new regulatory pathways for adaptive algorithms.

(4) Computational infrastructure—maintaining training pipelines that continuously process new data while serving clinical prediction demands significant computing resources, often unavailable in healthcare settings.

Research priorities: Developing rehearsal mechanisms that preserve performance on historical data while learning new patterns, creating drift detection algorithms that trigger recalibration only when clinically necessary, and establishing regulatory frameworks for continuously learning medical devices.
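The automated performance monitoring mentioned under concept drift detection can be sketched as a rolling-window check against a baseline. The class below flags possible drift when recent accuracy falls more than a tolerance below the validated baseline. The class name, window size, and thresholds are hypothetical choices for illustration, not a validated monitoring protocol.

```python
from collections import deque
from typing import Deque


class DriftMonitor:
    """Flag possible drift when rolling accuracy drops below baseline minus tolerance."""

    def __init__(self, baseline_accuracy: float, window: int = 100,
                 tolerance: float = 0.05) -> None:
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance
        self._outcomes: Deque[int] = deque(maxlen=window)

    def update(self, prediction_correct: bool) -> bool:
        """Record one adjudicated prediction; return True if recalibration is suggested."""
        self._outcomes.append(1 if prediction_correct else 0)
        if len(self._outcomes) < self._outcomes.maxlen:
            return False  # not enough data for a stable estimate yet
        rolling = sum(self._outcomes) / len(self._outcomes)
        return rolling < self.baseline_accuracy - self.tolerance


# Small window so the behavior is easy to follow (illustrative values only).
monitor = DriftMonitor(baseline_accuracy=0.90, window=10, tolerance=0.05)
flags = [monitor.update(True) for _ in range(10)]
flags.append(monitor.update(False))  # rolling accuracy 0.9: within tolerance
flags.append(monitor.update(False))  # rolling accuracy 0.8: below 0.85
print(flags[-2:])
```

To monitor across patient subgroups, one monitor instance could be kept per subgroup, at the cost of slower detection in small groups where outcomes accumulate slowly.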

Most current research has focused on diagnostic performance rather than patient outcomes, leaving a gap in knowledge about how AI affects clinical outcomes [69]. Longitudinal studies with long follow-up periods are needed to assess whether AI improves survival, reduces cardiovascular events, or improves quality of life. Comparative effectiveness studies should evaluate AI approaches against standard care in similar populations and clinical settings [70].

Cost-effectiveness evaluations need to quantify the financial effect and changes in resource use when implementing AI [71]. Value-based care models will require demonstration of AI-related quality metrics, along with the cost reductions attributable to the technology. Implementation science studies should identify effective strategies for making AI work in practice, including workflow optimization approaches, training program effectiveness, and organizational aspects of adoption [72].

Implementations need to be adapted to local workflows, clinician preferences, and patient care priorities [73]. They should be accompanied by a comprehensive training curriculum that integrates technical skills, ethical considerations, and clinical applications [74], with the aim of preparing clinicians to use AI capabilities effectively. AI literacy is gradually making its way into medical education curricula, but competency development at scale will require effort and time.

Current reimbursement models do not compensate clinicians or institutions for many AI-related costs, such as algorithm licensing, maintenance of technological infrastructure, and clinician training [75]. A potential solution is to implement value-based payment models in which clinicians are rewarded for patient outcomes rather than the volume of services provided. Patient engagement processes need to include transparency about AI use and shared decision-making so that patients understand the role of AI and related technology in their care [76]. Figure 3 provides a timeline and roadmap for anticipated AI/ML developments in cardiovascular medicine over the next decade.

Figure 3. Timeline and roadmap for future AI/ML developments in cardiovascular medicine. AI: artificial intelligence; ML: machine learning.

AI systems are enhancing preventive cardiology by improving the identification and management of risk factors. ML algorithms that analyze retinal photographs can predict cardiovascular risk factors, such as diabetes, hypertension, and smoking status, with an area under the curve greater than 0.85 [77]. Devices that collect data continuously allow heart rate variability, physical activity, and sleep quality to be analyzed as part of risk factor assessment. The sensitivity of consumer wearables for detecting atrial fibrillation approaches 90% compared with gold-standard monitoring [78], and population health analytics tools analyze large datasets to identify cardiovascular disease patterns, predict outbreaks, and optimize the resources used to manage patient care [79, 80].
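The area under the curve reported for such classifiers can be read as the probability that a randomly chosen positive case receives a higher model score than a randomly chosen negative case. The sketch below computes it directly from that pairwise definition. The function name `auc` and the toy labels and scores are illustrative assumptions, not data from the cited studies.

```python
from typing import Sequence


def auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a pair.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case.")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 1 = risk factor present, 0 = absent.
labels = [0, 0, 1, 1]
scores = [0.10, 0.40, 0.35, 0.80]
print(auc(labels, scores))
```

In this toy example, three of the four positive-negative pairs are ranked correctly, so the AUC is 0.75. The pairwise loop is quadratic in the number of cases and is meant for clarity, so a rank-based implementation would be preferable for large datasets.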

Risk stratification systems designed to identify patients at risk for acute myocardial infarction can process ECG data, clinical presentation, and laboratory values as soon as the patient arrives in the ED. These systems support timely point-of-care decisions about interventions. Automated risk stratification systems can identify STEMI patients who qualify for emergency catheterization with a sensitivity above 95%, and they improve specificity by reducing false-positive activations by 20% to 30%. Systems with advanced triage and management algorithms that consider multiple data streams can predict patient deterioration and optimize workflow and bed management within the ED [81].
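To make the relationship between sensitivity, specificity, and false-positive activations concrete, the following sketch computes each quantity from confusion-matrix counts. All counts are hypothetical and were chosen only so that the results fall in the ranges quoted above. The function names are likewise illustrative.

```python
def sensitivity(tp: int, fn: int) -> float:
    """Share of true STEMI cases that the system flags."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """Share of non-STEMI cases that the system correctly leaves unflagged."""
    return tn / (tn + fp)


def relative_reduction(before: float, after: float) -> float:
    """Relative drop from a baseline count or rate (0.25 means 25% fewer)."""
    return (before - after) / before


# Hypothetical cohort: 100 true STEMI and 200 non-STEMI presentations.
tp, fn = 96, 4                   # flagged vs. missed STEMI cases
fp_baseline, fp_with_ai = 40, 30  # false-positive activations
non_stemi = 200

print("sensitivity:", sensitivity(tp, fn))
print("specificity, baseline:", specificity(non_stemi - fp_baseline, fp_baseline))
print("specificity, with AI:", specificity(non_stemi - fp_with_ai, fp_with_ai))
print("false-positive reduction:", relative_reduction(fp_baseline, fp_with_ai))
```

Under these assumed counts, a 25% reduction in false-positive activations raises specificity from 0.80 to 0.85 while sensitivity stays at 0.96. The example shows why a relative drop in false activations translates into a smaller absolute gain in specificity.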

Continuous hemodynamic monitoring in critical-care settings, combined with AI algorithms, can capture subtle changes that precede clinical deterioration [82]. Some systems provide real-time recommendations for managing vasopressor therapy and fluid resuscitation based on patient physiology. Several recent studies have reported a 10–15% improvement in ICU mortality when care was guided by AI-driven protocols rather than standard care protocols [83].

Telemonitoring systems can use AI to identify heart failure exacerbations days before they become clinically detectable, permitting interventions that can reduce all-cause hospitalizations by 30–40% [84]. Predictive models for heart failure readmission have achieved area under the curve values above 0.80 and incorporate factors beyond clinical data, such as social determinants of health, medication adherence patterns, and prior healthcare utilization [85]. ML algorithms that model patient-reported outcomes together with complex physiologic data can help predict the optimal timing of medication adjustments [86].

AI-based blood pressure management is a more recent development that builds on continuous monitoring data; these models generate antihypertensive therapy recommendations based on each patient’s response and adherence. ML algorithms can predict effective drug combinations and optimal dose timing [87]. In addition, AI-driven protocols for personalized hypertension management achieved target blood pressure control in 85% of patients, compared with 65% of patients receiving standard care [88].
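The 85% and 65% control rates quoted above can be turned into an absolute difference and a number needed to treat. The sketch below does this with exact fractions to avoid floating-point rounding. It assumes the two groups are comparable, which this review does not detail, so the result is illustrative rather than an estimate of effect. The function names are hypothetical.

```python
import math
from fractions import Fraction


def absolute_difference(rate_new: Fraction, rate_old: Fraction) -> Fraction:
    """Absolute difference in the proportion of patients reaching target."""
    return rate_new - rate_old


def number_needed_to_treat(difference: Fraction) -> int:
    """Patients managed with the new protocol per one additional success."""
    if difference <= 0:
        raise ValueError("The new protocol shows no benefit; NNT is undefined.")
    return math.ceil(1 / difference)


# Control rates as quoted in the text above [88].
ai_protocol = Fraction(85, 100)
standard_care = Fraction(65, 100)

diff = absolute_difference(ai_protocol, standard_care)
print("absolute difference:", float(diff))
print("number needed to treat:", number_needed_to_treat(diff))
```

Under that comparability assumption, the absolute difference is 20 percentage points, which corresponds to about 5 patients managed under the AI-driven protocol for each additional patient reaching target blood pressure.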

Generative AI applications include synthetic data generation to enhance algorithm training when patient data are limited. These applications not only address privacy concerns but also support the development of more robust models [89]. Digital twins create a virtual model of the patient for prediction and treatment simulation, integrating data from a variety of sources to potentially simulate an individual patient’s responses to various treatment options [90]. Quantum computing (QC) holds potential for drug discovery and complex risk modeling, although clinical applications remain at various stages of development [91].

Regulations governing AI in cardiovascular medicine vary significantly across countries and pose specific challenges to global development and deployment [92]. The FDA has defined a digital health pathway for developers and has taken an expedited approach to premarket software review. The European Union focuses on regulatory frameworks for clinical evidence and emphasizes post-market surveillance as defined by the Medical Device Regulation. Emerging nations also face challenges related to technology and generative AI resources. Collaboration among regulators and technology transfer programs could help achieve equitable global access to AI-enabled cardiovascular care [93].

AI and ML represent transformative advances in cardiovascular medicine, with current applications demonstrating expert-level diagnostic accuracy, superior risk stratification compared to traditional scores, and improved clinical workflows. FDA-approved systems validate both technical feasibility and regulatory acceptance, while real-world implementations confirm meaningful clinical benefits, including reduced diagnostic errors and faster treatment delivery.

However, substantial challenges remain: data quality limitations, algorithmic bias across populations, integration barriers with clinical workflows, and the need for rigorous validation. Addressing these requires continued investment in diverse training datasets, transparent algorithm development, standardized validation frameworks, and equitable implementation strategies.

Future applications will likely feature multimodal systems integrating diverse data sources, continuously learning algorithms adapting to evolving medical evidence, and precision medicine approaches personalizing treatment selection. Success requires viewing AI as augmenting rather than replacing clinical expertise, with technology enhancing diagnostic accuracy and efficiency while preserving physician judgment and the patient-clinician relationship. Through evidence-based, ethically grounded implementation, AI can transform cardiovascular care delivery, improving diagnostic precision, optimizing treatments, and ensuring equitable access to high-quality care globally.

AI: artificial intelligence

CNN: convolutional neural network

ED: emergency department

LSTM: long short-term memory

ML: machine learning

RNNs: recurrent neural networks

VS: Conceptualization, Investigation, Methodology, Writing—original draft, Writing—review & editing, Project administration. GKC: Investigation, Data curation, Writing—original draft, Writing—review & editing. SK: Investigation, Formal analysis, Writing—review & editing. KA: Investigation, Validation, Writing—review & editing. SS: Investigation, Writing—review & editing, Supervision. All authors have reviewed, discussed, and agreed to their individual contributions. All authors read and approved the final submitted version of the manuscript.

The authors declare that they have no conflicts of interest. There are no personal, professional, or financial relationships that could potentially be construed as a conflict of interest with respect to this manuscript. No author has any financial or non-financial interest in the subject matter or materials discussed in this manuscript.

Not applicable.

Not applicable.

Not applicable.

All datasets analyzed for this study are included in the manuscript. This review is based on publicly available published literature. The search strategies, inclusion/exclusion criteria, data extraction forms, and all analyzed data are provided within the manuscript. No additional unpublished data were generated for this study. All source materials are properly cited and can be accessed through the references provided.

Not applicable.

© The Author(s) 2026.

Open Exploration maintains a neutral stance on jurisdictional claims in published institutional affiliations and maps. All opinions expressed in this article are the personal views of the author(s) and do not represent the stance of the editorial team or the publisher.

Copyright: © The Author(s) 2026. This is an Open Access article licensed under a Creative Commons Attribution 4.0 International License (https://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, sharing, adaptation, distribution and reproduction in any medium or format, for any purpose, even commercially, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.
